Having spent more than 25 years in the contact center industry – much of it focused on advancing Voice AI – part of me is genuinely pleased to see CX AI finally receiving the attention it deserves from enterprises, the market, and investors alike.

At the same time, I’m increasingly concerned.

The market is now crowded with slick, well-funded GenAI orchestration platforms that perform impressively in demos, yet consistently fall short when deployed in real, high-volume production environments. And when they fail, it’s enterprises – and their customers – who absorb the cost.

For years, winning enterprise deals depended on the quality of the demo – not just how polished it looked, but whether it demonstrated a real understanding of operational complexity and a credible path to ROI at scale. A good demo used to signal implementation competence.

That signal has been badly diluted.

Today, it’s trivially easy to assemble a visually impressive demo by stitching together best-of-breed Speech-to-Text (STT), Natural Language Understanding (NLU), and Text-to-Speech (TTS) components and leaning heavily on Large Language Models (LLMs). Handling a single, scripted call in isolation is no longer a meaningful test. It proves almost nothing about whether a solution can survive real-world conditions.

And reality is unforgiving.

There is no such thing as a free lunch: over the years, Omilia has repeatedly won enterprise customers who initially attempted to build on hyperscaler tooling – only to spend 18–24 months navigating integration friction, unpredictable costs, and performance constraints before abandoning the effort and coming to us for an out-of-the-box solution. One that delivers the entire Voice AI stack – natively integrated, operationally proven, and designed to scale.

Our 99% gross retention rate reflects that reality.

I see the same pattern emerging again with today’s GenAI “wunderkinds.” Many are undeniably talented. Few, however, have spent meaningful time inside a contact center – or lived through the failure modes that only surface at scale. Market research helps, but it doesn’t replace having built and operated over a hundred contact centers in real production environments. Some lessons are only learned the hard way.

Which brings me to the core question buyers should be asking in 2026: can this Voice AI solution scale – reliably, predictably, and economically?

In a hyper-competitive digital economy, CX AI must do more than work. It must scale to extreme volumes while delivering consistent, low-latency, human-like experiences – even under stress.

At Omilia, efficiency isn’t a slogan; it’s structural. As a fast-growing, profitable company that closed 2025 with well over $50M in live ARR, we serve some of the largest enterprises in the world – including Capital One, Marriott, PSEG, DWP, and Allstate. Our cloud platform regularly handles over 10,000 concurrent voice calls within a single customer environment – heavy voice traffic, not just lightweight chat sessions – representing millions of interactions per day.

Many vendors like to talk about “latency at scale.” Very few publish end-to-end data. Fewer still are precise about what they’re measuring – often conflating chat concurrency with voice, where the technical challenges are fundamentally different.

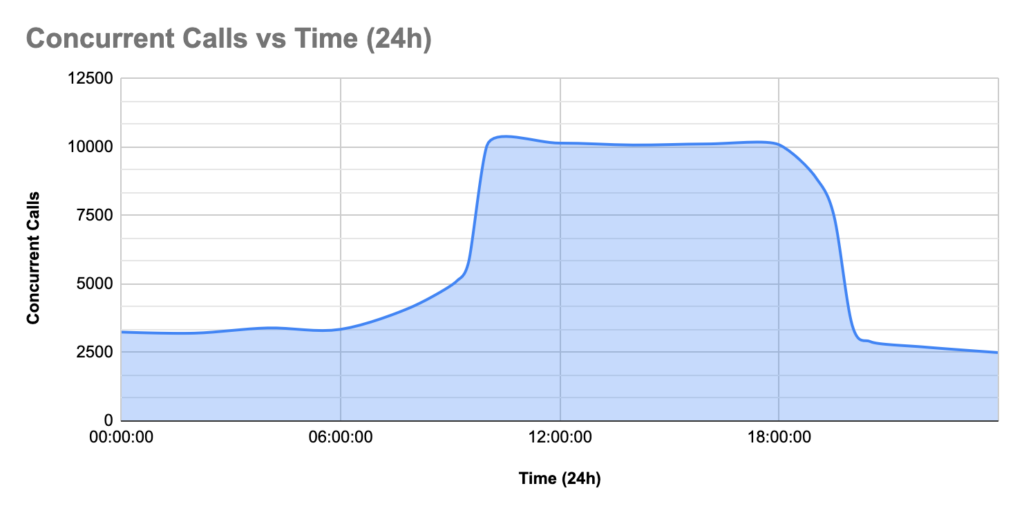

We are explicit. Omilia sustains 10,000+ concurrent voice calls with no latency degradation, no per-call cost inflation, and no architectural gymnastics. The system autoscales in real time, provisioning capacity seamlessly across multiple regions as demand spikes – without operational intervention. The graph above shows a recent real-life spike to more than 10,000 concurrent voice calls for one of our enterprise customers. The system handled it effortlessly, provisioning additional resources to accommodate the increased load.

For customers such as PSEG, NiSource, Capital One, RBC, Allstate, and Marriott – all subject to extreme and unpredictable volume swings – this isn’t a “nice to have.” It’s existential.

We can only deliver this because we own the entire Voice AI stack. Unlike orchestration-only platforms that externalize cost through bring-your-own LLMs and rely on a cascade of third-party components at every stage – introducing compounding latency and fragility at scale – Omilia controls STT, TTS, LLMs, and orchestration as a single, coherent, end-to-end system.
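To make the compounding argument concrete, here is a minimal sketch in Python. The stage names and every figure in it are hypothetical assumptions chosen for illustration, not measurements of Omilia or any other vendor. It assumes the stages run strictly in sequence and fail independently, which real pipelines only approximate.

```python
from math import prod

# Hypothetical per-stage figures for a cascaded voice pipeline.
# All values are illustrative assumptions, not vendor measurements.
stages = {
    "stt": {"latency_ms": 200, "availability": 0.999},
    "llm": {"latency_ms": 600, "availability": 0.999},
    "tts": {"latency_ms": 150, "availability": 0.999},
    "network_hops": {"latency_ms": 90, "availability": 0.999},
}


def end_to_end_latency_ms(stages):
    """Stages that run one after another add their latencies."""
    return sum(s["latency_ms"] for s in stages.values())


def end_to_end_availability(stages):
    """A turn succeeds only if every stage succeeds, so availabilities
    multiply (assuming independent failures)."""
    return prod(s["availability"] for s in stages.values())


if __name__ == "__main__":
    print(f"latency per turn: {end_to_end_latency_ms(stages)} ms")
    print(f"availability: {end_to_end_availability(stages):.4%}")
```

Under these assumptions, the end-to-end figure is always worse than that of any single stage: each added component contributes its latency to every conversational turn and lowers the combined availability a little further.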

Unlimited scale. Zero latency impact. One price.

Enterprise AI isn’t about impressive demos. It’s about delivering – every hour of every day – under real load. For buyers navigating today’s noise, the only reliable guideposts left are customer references, deep industry experience, and a truly voice-native stack.

It’s easy to promise the world.

At Omilia, we prefer to show what we’ve already delivered.

About the Author

Dimitris Vassos, CEO of Omilia

Dimitris has over 20 years of experience in customer care automation. He began his career at IBM UK in 1997, contributing to global voice product rollouts in more than 70 countries. In 2002, he founded Omilia with a mission to reinvent customer service. Today, he is one of the most experienced professionals in the applied speech and NLP industry, having led 150+ large-scale Conversational AI projects across 17 countries.